14 February 2024

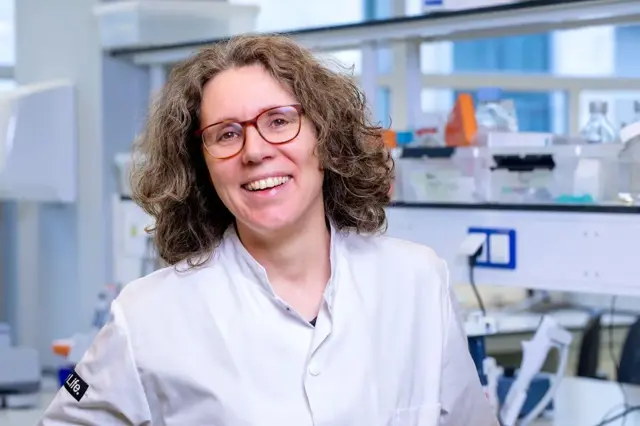

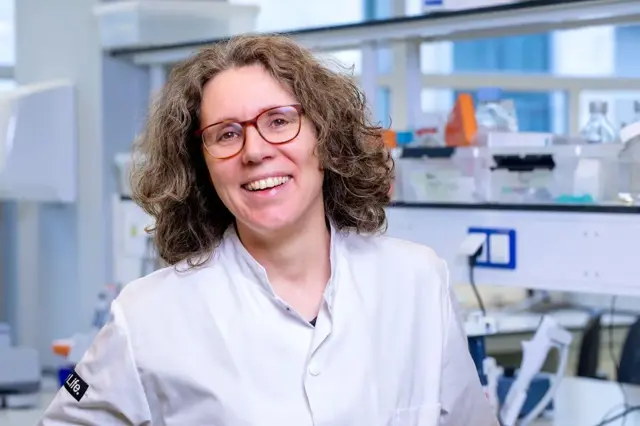

Raquel Fernández tries to make chatbots more human

Last year, AI systems that write human-like texts made their breakthrough worldwide. Yet many scientific questions about how exactly they work remain unanswered. The UvA asked three of its researchers how they try to make the underlying language models more transparent, reliable and human. This week, Raquel Fernández talks about her research.

UvA professor of Computational Linguistics & Dialogue Systems Raquel Fernández, affiliated with the ILLC, tries to bridge the gap between large language models and the way people use language. Fernández, who leads the Dialogue Modeling research group: 'I am interested in how people talk to each other and how we can naturally transfer this ability to machines.'

For computational linguists like Fernández, large language models suddenly offer a new tool for quantifying all kinds of properties of human dialogue and for testing hypotheses about human language use. One theory from psycholinguistics, for example, holds that people unconsciously adapt their language so that their conversation partner can understand them with as little effort as possible, for instance by shortening a sentence or by using simpler words or constructions. Fernández: 'With these powerful language models we can to a certain extent quantify how people use language. Then we see that people do indeed try to speak in such a way that the other person understands them with minimal effort. But we also see that for some sentences and for some language use the models underestimate that effort. That's because large language models are trained on many more texts than you and I can ever read.'

Although large language models are great at generating language, it is difficult to get them to do a specific task, such as booking a restaurant or a ticket. Fernández: 'Language models generate what is most likely and they are not trained to work with you to achieve the goal you have in mind. To achieve this, the system must know what the goal is and how it can achieve it. That is a major challenge at the moment.'

Read the entire article here.

Published by the University of Amsterdam.

Similar news items

September 9

Multilingual organizations risk inconsistent AI responses

AI systems do not always give the same answers across languages. Research from CWI and partners shows that Dutch multinationals may unknowingly face risks, from HR to customer service and strategic decision-making.

September 9

Making immunotherapy more effective with AI

Researchers at Sanquin have used an AI-based method to decode how immune cells regulate protein production. This breakthrough could strengthen immunotherapy and improve cancer treatments.

September 9

ERC Starting Grant for research on AI’s impact on labor markets and the welfare state

Political scientist Juliana Chueri (Vrije Universiteit Amsterdam) has received an ERC Starting Grant for her research into the political consequences of AI for labor markets and the welfare state.
